High-Performance Computer Cluster Overview

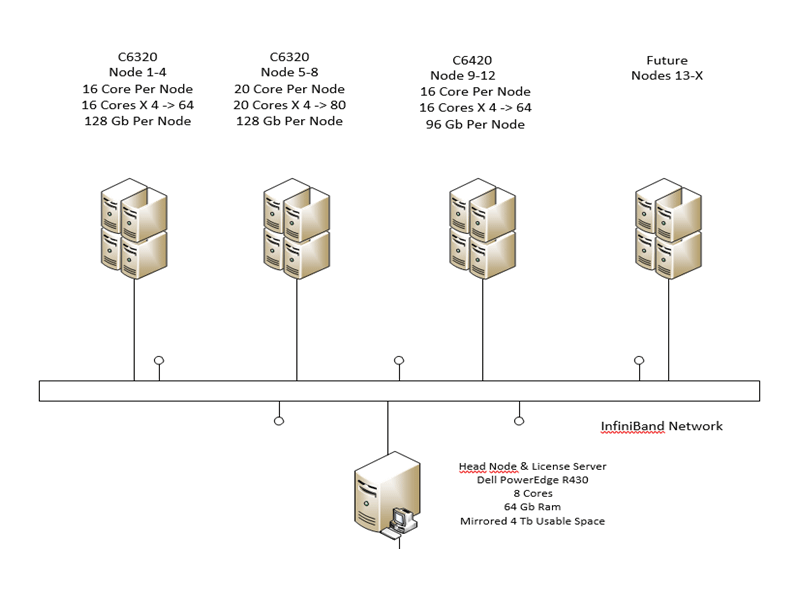

Topology

The CoE-HPC cluster currently has:

- Head node with 12 cores

- 8 servers with 16 cores each

- 4 servers with 20 cores each

- 208 computational cores in total (excluding the head node)

- Total RAM of 8 × 128 GB = 1024 GB (not including the head node)
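The totals above follow directly from the node counts. The head node is excluded from both figures, and the RAM figure is the one stated in the list:

```latex
\begin{aligned}
\text{compute cores} &= 8 \times 16 + 4 \times 20 = 128 + 80 = 208,\\
\text{RAM} &= 8 \times 128\ \text{GB} = 1024\ \text{GB}.
\end{aligned}
```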

Allocations

Each user receives a default allocation with the following directories:

- $HOME: the home directory can be used to host critical files such as software applications. It is backed up to a remote location, and each home directory is private to its user.

- data: the data file system is backed up to a remote location.

- work: a group directory where members of a research group can share files. Not backed up.

- scratch: a local directory for each user. Data in this directory is private to the user. Not backed up.
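Because scratch and work are not backed up, results worth keeping are usually copied to a backed-up location such as data once a run finishes. The sketch below is a minimal illustration under stated assumptions: the function name `preserve_results` is hypothetical, it assumes one subdirectory per run, and the actual paths of scratch and data on the cluster are not given here, so the caller passes them in.

```python
import shutil
from pathlib import Path


def preserve_results(scratch_dir: Path, backed_up_dir: Path, run_name: str) -> Path:
    """Copy one run's output from scratch (not backed up) into a
    backed-up directory such as data.

    Both directory paths are supplied by the caller; the real cluster
    paths are assumptions and are not hard-coded here.
    """
    source = scratch_dir / run_name
    if not source.is_dir():
        raise FileNotFoundError(f"no results found at {source}")
    destination = backed_up_dir / run_name
    # dirs_exist_ok=True (Python 3.8+) lets a repeated call refresh an
    # existing copy instead of failing; missing parent directories are created.
    shutil.copytree(source, destination, dirs_exist_ok=True)
    return destination
```

For example, `preserve_results(Path("/path/to/scratch"), Path("/path/to/data"), "run01")` copies `run01` and everything under it; both paths are placeholders.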

Job Submission

The CoE-HPC uses SLURM (Simple Linux Utility for Resource Management) to manage resource scheduling and job submission. SLURM groups the compute nodes into “partitions”, each with its own limits, to separate different types of jobs.
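Jobs are typically submitted with `sbatch`, which reads `#SBATCH` directives from comment lines at the top of a script, before its first executable statement. Because the script runs under the interpreter named on its `#!` line, a batch script can itself be Python. The sketch below assumes the default partition `defq`; the job name, CPU count, time limit, and output file name are illustrative placeholders rather than cluster recommendations. Saved under a hypothetical name such as `job.py`, it would be submitted with `sbatch job.py`.

```python
#!/usr/bin/env python3
#SBATCH --job-name=example
#SBATCH --partition=defq
#SBATCH --nodes=1
#SBATCH --ntasks=1
#SBATCH --cpus-per-task=4
#SBATCH --time=01:00:00
#SBATCH --output=example_%j.out
"""Minimal SLURM batch job written in Python.

sbatch only honours the #SBATCH lines above because they appear before
the first executable statement; keep them at the top of the file.
"""
import os


def job_summary() -> str:
    # SLURM exports these variables inside a running job; the fallback
    # values only apply when the script runs outside SLURM (e.g. testing).
    job_id = os.environ.get("SLURM_JOB_ID", "not-in-slurm")
    partition = os.environ.get("SLURM_JOB_PARTITION", "unknown")
    cpus = os.environ.get("SLURM_CPUS_PER_TASK", "1")
    return f"job {job_id} on partition {partition} with {cpus} CPU(s) per task"


if __name__ == "__main__":
    print(job_summary())
```

The `%j` in the output file name is replaced by the job ID, so each run writes to its own log file.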

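| Partition | Time limit (h:mm:ss) | Nodes | Cores (default/max) | Max memory per node (GB) |
|---|---|---|---|---|
| Debug | 2:00:00 | 001-004 | 1/16 | 128 |
| defq* | unlimited | 001-004 | 1/16 | 128 |
| Shared | unlimited | 005-008 | 1/20 | 128 |
| Fast | unlimited | 009-012 | 1/16 | 96 |

The asterisk (*) marks defq as the default partition, which SLURM uses when a job does not request one.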
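To see which partitions could hold a given single-node job, the partition table can be encoded as data and filtered. This is a hypothetical helper, not a cluster tool: it assumes the Default/Max column counts cores and that a request must fit within one node's limits. SLURM itself remains the authority on whether a job is accepted.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Partition:
    name: str
    max_hours: Optional[float]  # None means no time limit
    nodes: str
    max_cores: int              # assumed meaning of the "Default/Max" column
    max_mem_gb: int


# Values transcribed from the partition table above.
PARTITIONS = [
    Partition("Debug", 2.0, "001-004", 16, 128),
    Partition("defq", None, "001-004", 16, 128),
    Partition("Shared", None, "005-008", 20, 128),
    Partition("Fast", None, "009-012", 16, 96),
]


def fitting_partitions(hours: float, cores: int, mem_gb: int) -> List[str]:
    """Return names of partitions whose limits can hold a single-node request."""
    return [
        p.name
        for p in PARTITIONS
        if (p.max_hours is None or hours <= p.max_hours)
        and cores <= p.max_cores
        and mem_gb <= p.max_mem_gb
    ]
```

For example, `fitting_partitions(hours=10, cores=18, mem_gb=100)` returns `['Shared']`: only the 20-core nodes have enough cores, and Debug's two-hour limit is too short anyway.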

Software

- LAMMPS

- Quantum ESPRESSO

- VASP

- MATLAB

- Akantu

- Variety of in-house codes

- ANSYS (available soon)

- Abaqus (available soon)

- TensorFlow (available soon)

Acknowledgement in Publications

All users who rely on the computational resources of the CoE-HPC cluster for their research must include an acknowledgement statement in their publications. Any of the following three options, or variations thereof, may be used:

- Option 1: “This work used the High-Performance Computing cluster of the College of Engineering at Villanova University.”

- Option 2: “Computer time allocations at Villanova’s CoE-HPC cluster are acknowledged.”

- Option 3: “All simulations were run on the High-Performance Computing cluster of the College of Engineering at Villanova University.”